AI in the Arts and Design @ CAA 2020

See photo gallery from the event below.

The CAA 2020 special session on AI in the Arts and Design, sponsored by Leonardo/ISAST, featured an exciting and distinguished group of speakers addressing the topic: Does generative and machine creativity, or AI in the arts and design, represent an evolution of “artistic intelligence” or is it a rupture in the evolution of creative practice yielding new forms and types of authorship? xREZ Director Ruth West and Andres Burbano (Universidad de los Andes) co-chaired the session in Chicago. February 14th was CAA 2020’s “pay as you wish day” for registration, generously sponsored by the Thoma Foundation.

Speakers

Philip Galanter (Texas A&M University)

When should generative AI be considered the author of its own creation versus the human using the AI software? Moving from the normative realm of aesthetics to that of ethics, this presentation considers when humans will be morally obliged to recognize AIs as ethical agents worthy of rights and due consideration. For example, if someday your AI artist fearfully begs to not be turned off, what should you do?

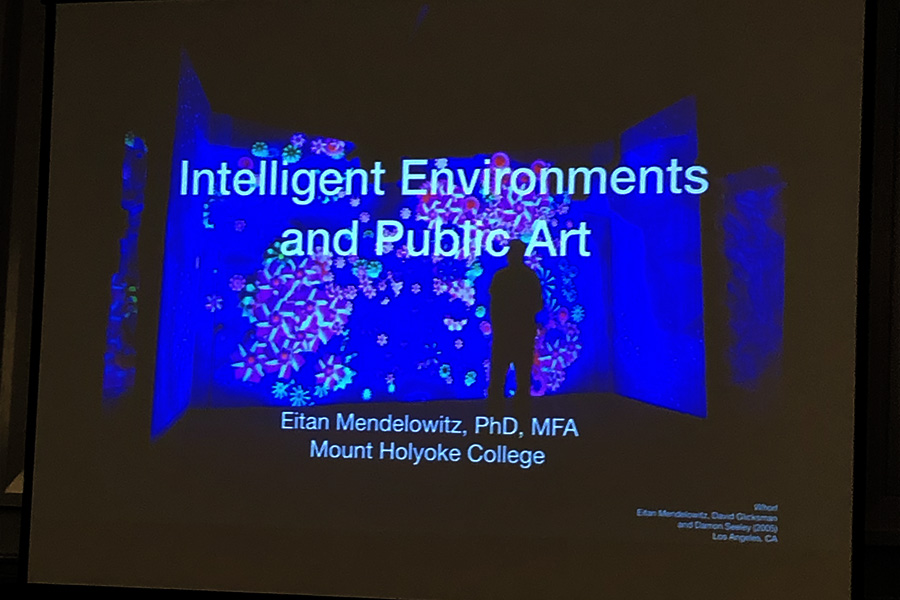

Eitan Mendelowitz (Mount Holyoke College)

As they become smaller and more affordable, sensors, actuators, and computers are increasingly being embedded into our built environment. Recent advances in deep learning allow systems to understand real-time video, audio, and text that was previously opaque to computational analysis. Simultaneously, the accessibility of custom architectural-scale LED displays and the availability of cheap robotics are allowing for new forms of computational output. Using these and related technologies, we are beginning to see the creation of architectural spaces that see, hear, think, and act. While public art has always had the power to shape how individuals relate to their environments, a new generation of artificially intelligent public art encourages people to engage with both their surroundings and each other through situated experiences and embodied interactions.

Meredith Tromble (San Francisco Art Institute)

Could art provide the stimuli for AIs to link complexity of thought with agency? A disembodied intelligence has no agency, as without bodily emotions and physical feedback, it can endlessly consider competing options but lacks (unprogrammed) motivation to choose one. (Some humans with neurological illnesses demonstrate a similarly pathologically indecisive condition.) Most current approaches to AI ask “How can machines use massive amounts of data to solve complex problems?” But, as Wired writer Gary Marcus posits, “We could ask, how do children acquire language and come to understand the world, using less power and data than current AI systems do?” Surveying attempts to move in this direction such as the European Union’s iCub, a child-like “cognitive humanoid robotic platform,” the paper considers if, in certain conditions, AIs with robotic bodies could develop their own experiences of embodiment through encounters with art. Looking beyond “deep learning” and drawing on work by artists such as Ian Cheng, Lynn Hershman, and Jenna Sutela, this paper envisions what art could do for AI–and what art can do for humans seeking to create the imagined state of “artificial intelligence.”

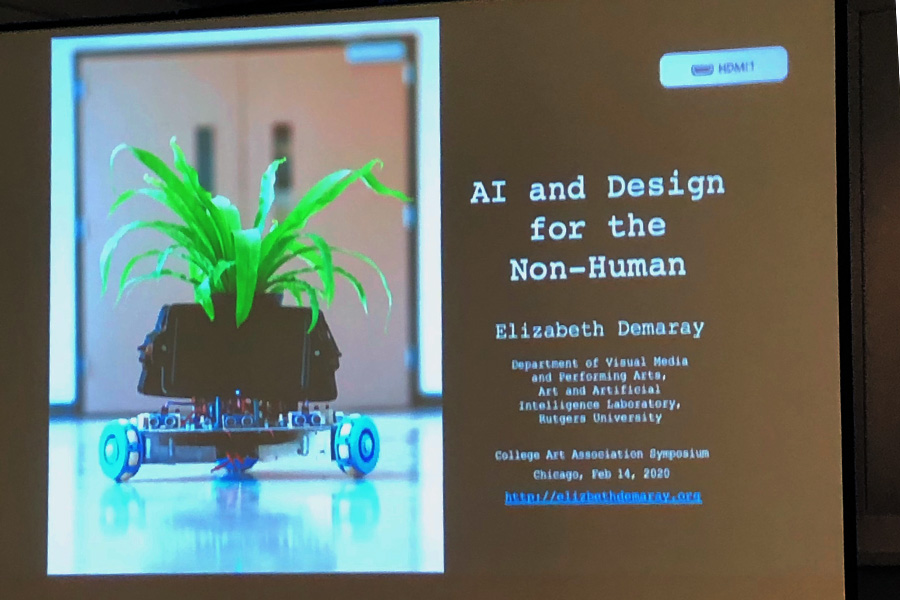

Elizabeth Demaray (Rutgers University)

While much research has focused on the way that AI is shaping visual culture, within the space of fine art little attention has been paid to the role of AI in relation to the non-human. At “AI and Aesthetics in Design for the Non-Human” I would like to present several of my artworks that consider the potential impact of AI on the future of other life forms.

The first of these works is titled “The IndaPlant Project: An Act of Trans-Species Giving.” This project entails creating artificially intelligent robotic supports for houseplants. With the engineer Dr. Qingze Zou, the computer scientist Ahmed Elgammal and the biologist Simeon Kurchoni, I am building moving floraborgs. These entities, which are part plant and part robot, allow potted plants to roam independently in a domestic environment in search of light and water.

The second work is titled “PandoraBird: Identifying the Types of Music that May Be Favored by Our Avian Co-habitants.” It is a site-specific installation that uses a computer vision system to track the musical preferences of local songbirds at specially designed audio-enabled feeding stations. Though no formal studies have been conducted in this area, ample circumstantial evidence shows that many avian species pay attention to human sound. By utilizing a computer vision system that interfaces with an AI system designed for humans, PandoraBird ultimately aims to create a database and interactive software that allows birds to make human-type music choices.

Marian Mazzone (College of Charleston) and Ahmed Elgammal (Rutgers University)

Can AI systems be independently creative and make art? How can artists and machines co-create, and why should they? Various AI researchers have trained machines and generative processes to produce novel and visually interesting works, devising machinic means of creativity. At the Rutgers Art & Artificial Intelligence Lab we created AICAN (AI-Creative Adversarial Network) tasked with using its creative algorithm to make works of art. Playform was then developed as an easy-to-use program that allows artists to integrate AI technology into their own art making as they choose: as a way to shape data, as an inspiration for new kinds of imagery, as an additional element in multi-media works, etc. All AI systems generate work in degrees of relationship between human and machine: sometimes the machine works rather independently (AICAN), and sometimes as a partner with a human artist (Playform). When exhibiting the AICAN works a common question is ‘who is the artist?’, but the answer that the art is AI-derived never results in viewers rejecting the work as art, as our surveys support. There has also been strong interest on the part of artists in exploring AI capabilities. We will demonstrate AICAN and Playform, discuss interactions with the public and the artists, and address questions of authorship and creativity within this new means of making art in the 21st century.

Christiane Paul (The New School)

In the past few years artificial intelligence has moved to the center of technology discussions, and an increasing number of exhibitions and symposia have been devoted to the subject. The term AI has become increasingly blurry, being applied to a vast range of approaches and applications. The scenarios playing out in popular culture oscillate between the dystopian fear of sentient machines taking over the world and the utopian desire for machine sentience that inspires us with as yet unknown levels of creativity. The presentation will take my exhibition on AI in art (Kellen Gallery, The New School, February – April 2020) as a starting point to give an overview of artistic AI projects addressing creativity. Artistic engagement with AI will be approached according to the different areas or ‘senses’ that are being automated, among them vision (image recognition); speech/voice (personality); language and knowledge (cognitive search) with an emphasis on issues of ethics and bias in machine learning. In his ongoing series “Learning to See,” for example, the artist Memo Akten uses machine learning algorithms to explore how AI learns and understands, thereby reflecting on the self-affirming cognitive biases of AI and humans. Artists from Lynn Hershman Leeson and Ken Feingold to Ian Cheng have created chatbots or used commercial bots to probe the potential of conversation, personality, and sentience. Also discussed will be artworks that explore the impact of automation on artistic creativity, from Harold Cohen’s pioneering AI drawing software Aaron (1971-2016) to the current use of Generative Adversarial Networks (GANs).

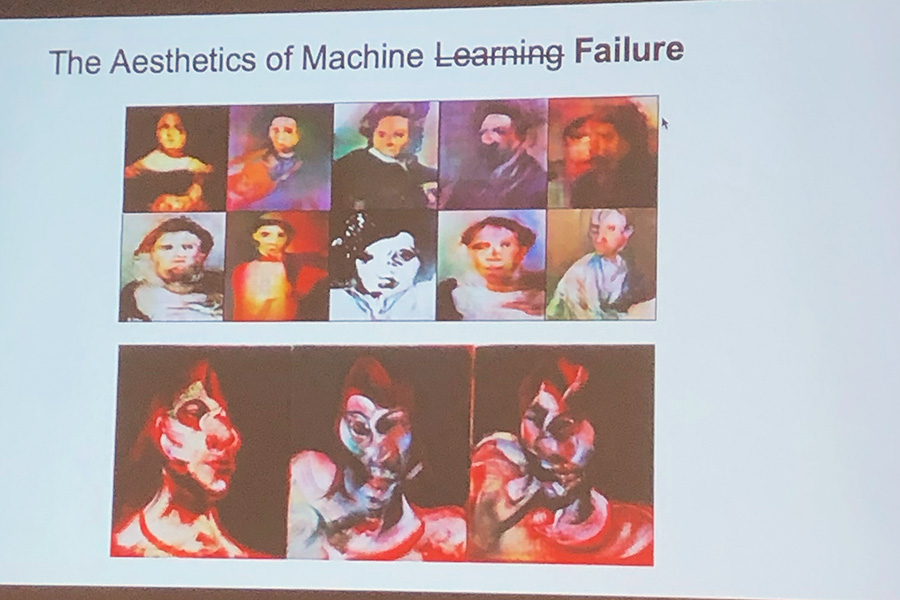

Jeremy Stewart (Rensselaer Polytechnic Institute)

A reappraisal of the artistic process through the lens of A.I./M.L., using the language of backpropagation and optimization algorithms as they relate to deep learning and neural networks. This paper analyzes and discusses the artistic process as an instance of Stochastic Gradient Descent (SGD) performing upon the practice of the artist (a connectionist, non-representational model). This paper elaborates and develops upon the language of such algorithms, including learning rate, momentum, and weight decay as these concepts align with the artistic process, and in so doing offers new insights into the way we think about machine learning and neural networks while providing some possible avenues for future artistic research.
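For readers unfamiliar with the optimization terms named in this abstract, the following is a minimal sketch of one common formulation of SGD with momentum and weight decay. It is not taken from the paper. The function names (`sgd_step`, `run_sgd`), the one-parameter quadratic loss, the noise model, and all default values are hypothetical choices made only for illustration.

```python
import random


def sgd_step(w, grad, velocity, lr, momentum, weight_decay):
    """One SGD update on a single scalar parameter (illustrative form).

    lr           -- learning rate: how far each update moves the parameter
    momentum     -- fraction of the previous update direction carried forward
    weight_decay -- pull toward zero, added to the gradient (L2-style penalty)
    """
    g = grad + weight_decay * w            # weight decay enters via the gradient
    velocity = momentum * velocity + g     # momentum accumulates past gradients
    w = w - lr * velocity                  # step against the accumulated direction
    return w, velocity


def run_sgd(target=3.0, steps=500, lr=0.05, momentum=0.9,
            weight_decay=0.0, noise_std=0.1, seed=0):
    """Minimize the toy loss (w - target)**2 using noisy gradients.

    The Gaussian noise is a stand-in (an assumption) for the randomness
    that mini-batch sampling introduces in real stochastic training.
    """
    rng = random.Random(seed)
    w, velocity = 0.0, 0.0
    for _ in range(steps):
        grad = 2.0 * (w - target) + rng.gauss(0.0, noise_std)
        w, velocity = sgd_step(w, grad, velocity, lr, momentum, weight_decay)
    return w


if __name__ == "__main__":
    print(run_sgd())                   # settles near the target despite the noise
    print(run_sgd(weight_decay=1.0))   # weight decay pulls the result toward zero
```

In this toy setting, weight decay shifts the point the parameter settles at from `target` toward zero, while momentum smooths the noisy individual steps. These are the behaviours of the algorithm itself; how they map onto artistic practice is the subject of the paper.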